Last updated 6 months ago by Francis

When it comes to thermal imaging and infrared technology, there is often confusion surrounding the terms. Many people wonder, “Is infrared and thermal the same?” The answer is no, they are not the same. While they are related, they refer to different concepts.

In this article, we will explore the key differences between infrared and thermal, providing a comprehensive explanation of these terms. By the end of this article, you will have a better understanding of the distinctions between these two technologies.


Key Takeaways:

- Infrared technology and thermal imaging are related but distinct concepts.

- Understanding their differences can help individuals make informed decisions about their use.

- Throughout this article, we will explore the definitions, differences, similarities, and practical applications of infrared and thermal.

Understanding Infrared Technology

Before comparing infrared and thermal imaging, it’s essential to understand infrared technology. Infrared radiation (IR) is a type of electromagnetic radiation that has longer wavelengths than visible light. This radiation is invisible to the human eye, but it can be detected by specialized equipment such as infrared cameras.

Infrared cameras and imaging systems use IR radiation to create images of objects. Cameras built for thermal work detect and measure the infrared radiation that objects in their field of view emit as heat and convert it into an image. The resulting image shows the surface temperature distribution of the object; in a typical grayscale palette, warmer areas appear lighter and cooler areas darker.

There are different types of infrared cameras available, such as cooled and uncooled cameras. Cooled cameras use cryogenic cooling to reduce the temperature of the camera’s sensor, allowing it to detect even very weak IR signals. Uncooled cameras, on the other hand, use sensors (most commonly microbolometers) that operate at or near ambient temperature; some designs add a small thermoelectric element, not to chill the sensor, but to hold its temperature stable.

Infrared cameras have various applications, including surveillance, monitoring industrial processes, and medical imaging. They are particularly useful in situations where detecting temperature differences is critical, such as in firefighting and search-and-rescue operations.

What is Infrared Radiation?

Infrared radiation is a type of electromagnetic radiation that has longer wavelengths than visible light. It accounts for about half of the total energy emitted by the Sun and is also emitted by objects on Earth. IR radiation is divided into three categories: near-infrared (NIR), mid-infrared (MIR), and far-infrared (FIR). Each category has a specific range of wavelengths and corresponding applications.
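The three categories above can be sketched as a simple lookup. This is a minimal illustration only: the band edges used here are assumptions, because different standards and industries draw the boundaries in different places, and the function name `classify_ir_band` is hypothetical.

```python
# Band edges in micrometres. These cut-offs are assumed for illustration;
# other conventions place the boundaries elsewhere.
IR_MIN_UM = 0.7      # roughly where visible red light ends (700 nm)
NIR_MAX_UM = 3.0     # assumed upper edge of near-infrared
MIR_MAX_UM = 50.0    # assumed upper edge of mid-infrared
IR_MAX_UM = 1000.0   # 1 mm, roughly where microwaves begin


def classify_ir_band(wavelength_um: float) -> str:
    """Return the infrared band name for a wavelength in micrometres."""
    if wavelength_um < IR_MIN_UM or wavelength_um > IR_MAX_UM:
        return "not infrared"
    if wavelength_um <= NIR_MAX_UM:
        return "near-infrared (NIR)"
    if wavelength_um <= MIR_MAX_UM:
        return "mid-infrared (MIR)"
    return "far-infrared (FIR)"


for wavelength in (0.5, 0.94, 10.0, 100.0):
    print(f"{wavelength} µm -> {classify_ir_band(wavelength)}")
```

With these assumed edges, the 0.94 µm light used by many remote controls falls in the near-infrared band, while the radiation emitted by room-temperature objects sits at much longer wavelengths.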

How Do Infrared Cameras Work?

Infrared cameras work by detecting the heat emitted by objects in their field of view. They do this using a specialized sensor that absorbs IR radiation and converts it into an electrical signal. The camera’s software then processes this signal to create an image that shows the temperature distribution of the object.
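The last step, turning processed readings into a picture, can be sketched in a few lines. This is a simplified illustration, not how any particular camera's firmware works: it assumes the sensor signal has already been converted to a grid of temperatures in °C, and the function name `temperatures_to_grayscale` and the sample values are hypothetical.

```python
def temperatures_to_grayscale(temps_c):
    """Map a 2D grid of temperatures (°C) to grey levels from 0 to 255.

    The coldest reading becomes 0 (black) and the hottest 255 (white).
    """
    flat = [t for row in temps_c for t in row]
    t_min, t_max = min(flat), max(flat)
    span = t_max - t_min
    if span == 0:
        # A uniform scene has no contrast; render it as mid-grey.
        return [[128 for _ in row] for row in temps_c]
    return [[round(255 * (t - t_min) / span) for t in row] for row in temps_c]


# Hypothetical 3x3 frame: a warm object in front of a cooler background.
frame = [
    [20.0, 21.0, 20.5],
    [22.0, 36.5, 23.0],
    [20.0, 21.5, 20.0],
]
image = temperatures_to_grayscale(frame)
for row in image:
    print(row)
```

Real cameras usually apply a color palette and a fixed or user-selected temperature range instead of stretching each frame from its own minimum to its own maximum, but the principle of mapping temperature to pixel value is the same.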


An Introduction to Thermal Imaging

Infrared technology is used for a variety of applications, including thermal imaging. But what is thermal imaging, exactly?

Thermal imaging is a method of capturing the infrared radiation emitted by objects. This radiation is translated into a visual image, revealing the temperature distribution of the objects in the image.
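The amount of radiation a surface gives off rises steeply with its temperature, which is what makes this translation possible. As a rough illustration, the Stefan-Boltzmann law gives the total power radiated per unit area as emissivity × σ × T⁴. The function name and the default emissivity of 0.95 below are assumptions chosen for the example.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)


def radiant_exitance(temp_kelvin: float, emissivity: float = 0.95) -> float:
    """Total power radiated per square metre of surface, in W/m^2.

    An emissivity of 1.0 describes an ideal black body; 0.95 is an
    assumed value for a matte, non-metallic surface.
    """
    if temp_kelvin < 0:
        raise ValueError("temperature in kelvin cannot be negative")
    if not 0.0 < emissivity <= 1.0:
        raise ValueError("emissivity must be in the range (0, 1]")
    return emissivity * STEFAN_BOLTZMANN * temp_kelvin ** 4


cool = radiant_exitance(293.15)  # about 20 °C
warm = radiant_exitance(313.15)  # about 40 °C
print(f"20 °C surface: {cool:.0f} W/m^2")
print(f"40 °C surface: {warm:.0f} W/m^2")
```

A real thermal camera only responds to part of the spectrum, so this total-power figure is an idealization, but it shows why a modest temperature difference produces a clearly measurable difference in radiation.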

Thermal imaging is particularly useful in situations where traditional visual inspection methods are not effective. For example, thermal imaging can be used to detect insulation leaks, locate hot spots in electrical systems, and identify overheating components in machinery.

Thermal imaging also has applications in the medical field, where it is used to detect temperature changes in the body that may indicate injuries or illnesses, such as inflammation. It has also been explored as a supplementary tool in breast cancer screening, although it is not considered a replacement for mammography.

Unlike some other imaging modalities, thermal imaging is non-invasive and does not expose the patient to ionizing radiation, which makes it a safe way to gather additional information alongside established diagnostic methods.

So, while infrared technology is a broad term that encompasses many different applications, thermal imaging specifically refers to the use of infrared radiation to capture temperature information. Understanding the differences between these applications can help you determine which technology is best suited for your needs.

Key Differences Between Infrared and Thermal

While infrared and thermal imaging may seem similar at first glance, there are several key differences between the two technologies. Understanding these distinctions is essential for anyone looking to use these tools effectively.

Infrared vs. Thermal

The most significant difference between infrared and thermal imaging lies in their applications. Infrared technology is used for a wide range of applications, from measuring temperature to detecting motion and even analyzing the composition of materials. Thermal imaging, on the other hand, is primarily used for identifying temperature differences in objects and environments.

Another difference is what each kind of camera actually detects. Infrared cameras in the broad sense respond to radiation anywhere in the infrared spectrum, and many of them, such as night-vision security cameras with infrared illuminators, form an image from near-infrared light reflected off the scene, much as a visible-light camera does. Thermal cameras, on the other hand, detect the longer-wavelength infrared radiation that objects emit because of their own temperature and convert it into a thermal image that shows the temperature variations in a scene.

Other Differences

Here are some more specific differences between infrared and thermal technology:

| Aspect | Infrared | Thermal |
|---|---|---|
| Wavelength Range | 0.7 to 1,000 µm (700 nm to 1 mm) | Typically 8 to 14 µm |
| Resolution | Typically higher | Typically lower |
| Image Clarity | Clearer | Blurrier |
| Cost | Typically lower | Typically higher |

As you can see, there are several differences in how infrared and thermal imaging work and what they are used for. Understanding these distinctions is essential for anyone looking to choose between the two technologies or use them effectively in their work.

Similarities Between Infrared and Thermal

While there are clear differences between infrared and thermal imaging, there are also some similarities that are worth noting. Both technologies rely on capturing heat radiation to create images, making them incredibly useful for detecting temperature variations and hotspots.

Additionally, both infrared and thermal imaging have a wide range of applications in various industries. For example, they are commonly used in electrical and mechanical inspections to identify potential issues before they become major problems.

Moreover, infrared and thermal imaging can both be used for surveillance and security purposes. They can reveal intruders or concealed objects by picking up body heat and other thermal radiation.

Finally, both technologies offer non-contact temperature measurement, making them ideal for use in situations where direct contact is either impossible or undesirable. For instance, they can be used to measure the temperature of food products without the risk of contamination.
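Non-contact readings depend on how efficiently the surface emits radiation, a property called emissivity. The sketch below shows one commonly used simplified correction. It assumes a grey-body surface, treats the sensor as responding to total radiated power, and ignores the atmosphere between the object and the sensor. The function name and the example values are hypothetical.

```python
def corrected_temperature(apparent_k, emissivity, reflected_k):
    """Estimate true surface temperature (K) from an apparent reading.

    apparent_k  : temperature the instrument reports with emissivity set to 1
    emissivity  : assumed emissivity of the surface, in the range (0, 1]
    reflected_k : temperature of the surroundings reflected by the surface

    Simplified model: the sensor sees the surface's own emission plus a
    reflected share of the surroundings. Atmospheric effects are ignored.
    """
    if not 0.0 < emissivity <= 1.0:
        raise ValueError("emissivity must be in the range (0, 1]")
    emitted = (apparent_k ** 4 - (1.0 - emissivity) * reflected_k ** 4) / emissivity
    if emitted < 0:
        raise ValueError("inputs are not physically consistent")
    return emitted ** 0.25


# Hypothetical reading: apparent 350 K, surface emissivity 0.80,
# surroundings at 293 K.
estimate = corrected_temperature(350.0, 0.80, 293.0)
print(f"estimated surface temperature: {estimate:.1f} K")
```

When the surface is hotter than its surroundings, a lower emissivity means the true temperature is higher than the uncorrected reading, which is why shiny metal surfaces are easy to underestimate.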


An Introduction to the Applications of Infrared Technology

To better understand the practical uses of infrared technology, it’s important to break it down into specific applications. These applications can be seen in industries such as:

- Medical: Infrared technology is used in medical imaging to identify abnormalities and diagnose diseases, such as breast cancer or musculoskeletal injuries.

- Industrial: Infrared technology is used to detect heat signatures in machinery, identify potential issues, and prevent system failures. It is also used in material testing, such as identifying defects in semiconductors.

- Environmental: Infrared technology can be used to detect and monitor environmental changes, such as temperature fluctuations in oceans, the impact of climate change on landscapes, and volcanic activity.

The ability of infrared technology to identify and interpret data from heat sources has made it an essential tool in many fields. Its non-intrusive nature makes it a valuable asset in medicine, while its accuracy and efficiency have made it a crucial component of many industrial processes.

Furthermore, the ability of infrared technology to detect temperature changes in the environment has made it a vital tool in monitoring and predicting environmental phenomena. With these applications in mind, it’s clear that infrared technology is a powerful tool with far-reaching uses.

An Introduction to the Applications of Thermal Imaging

Thermal imaging is a technology that enables the visualization and capture of infrared radiation. In simpler terms, it allows us to see the temperature profile of an object or environment.

Thermal imaging is widely used in both scientific and commercial applications. It offers a non-destructive, non-contact method of measuring temperature and can be used in a variety of fields, including aerospace, automotive, and medical industries.

One of the key advantages of thermal imaging is its ability to detect temperature variations, even in total darkness. This is because every object with a temperature above absolute zero emits some infrared radiation, whether or not any visible light is falling on it. By detecting and analyzing this radiation, thermal imaging can create images that reveal hidden patterns and temperature profiles.
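Wien's displacement law offers a quick way to see where this emitted radiation is strongest: the peak wavelength of an ideal black body equals a constant divided by its absolute temperature. The sketch below applies it to a few everyday temperatures. The function name and the example temperatures are illustrative assumptions.

```python
WIEN_B_UM_K = 2897.771955  # Wien displacement constant, in µm·K


def peak_wavelength_um(temp_kelvin: float) -> float:
    """Wavelength (µm) at which an ideal black body radiates most strongly."""
    if temp_kelvin <= 0:
        raise ValueError("temperature must be above absolute zero")
    return WIEN_B_UM_K / temp_kelvin


# Illustrative temperatures, not measurements.
examples = [
    ("room-temperature wall", 293.15),
    ("human skin", 306.15),
    ("hot machine bearing", 423.15),
]
for label, temp_k in examples:
    print(f"{label}: peak near {peak_wavelength_um(temp_k):.1f} µm")
```

Objects near room or body temperature peak at around 10 µm, which helps explain why thermal cameras commonly operate in the 8 to 14 µm range.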

Thermal imaging can also be used to identify potential problems in machinery and equipment by detecting hot spots or abnormal temperature patterns. This allows for preventative maintenance, reducing the risk of equipment failure and downtime.
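Hot-spot detection of this kind can be as simple as flagging every pixel above a limit. The following is a minimal sketch, assuming the thermal image is available as a grid of temperatures in °C. The function name, the sample grid, and the 70 °C limit are all hypothetical.

```python
def find_hot_spots(temps_c, threshold_c):
    """Return (row, col, temperature) for every cell hotter than threshold_c."""
    return [
        (r, c, t)
        for r, row in enumerate(temps_c)
        for c, t in enumerate(row)
        if t > threshold_c
    ]


# Hypothetical readings from a piece of equipment.
grid = [
    [41.0, 42.5, 41.8],
    [42.0, 78.3, 43.1],
    [41.5, 44.0, 81.0],
]
hot = find_hot_spots(grid, threshold_c=70.0)
for row, col, temp in hot:
    print(f"hot spot at row {row}, column {col}: {temp} °C")
```

In practice the limit would come from the equipment's rated operating temperature or from a comparison with earlier images of the same component.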

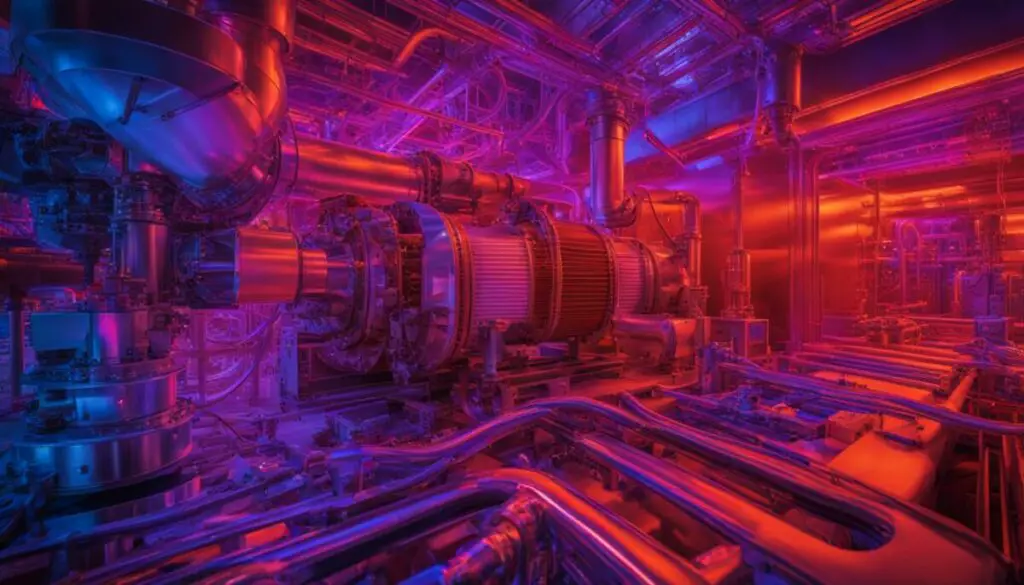

Thermal imaging can produce images like the one above, which shows the temperature distribution of a heat exchange unit. The image was created using a thermal imaging camera, which captures the emitted infrared radiation from the surface of the object.

Choosing Between Infrared and Thermal

Now that we have explored the differences between infrared and thermal, it’s important to consider which technology is best suited for your needs.

If you are looking for a non-contact temperature measurement, both a spot infrared thermometer and a thermal imaging camera can provide accurate results. However, if you need a more detailed and comprehensive temperature analysis, thermal imaging may be the better option, as it maps temperature across large areas and makes hotspots easier to identify.

Another factor to consider is cost. Infrared thermometers are generally less expensive than thermal imaging cameras, making them a more accessible option for those on a budget.

However, if you require precise temperature measurement, thermal imaging cameras may be worth the investment. They provide extensive temperature data and can detect gradual temperature changes over time, making them useful for preventative maintenance and identifying potential issues before they become major problems.
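Spotting a gradual change usually means comparing readings of the same component over time. One simple way to do that, sketched below, is to fit a straight line to the readings and look at its slope. The function name and the sample data are hypothetical, and a real maintenance program would also account for load and ambient temperature.

```python
def temperature_trend(hours, temps_c):
    """Least-squares slope of temperature against time, in °C per hour."""
    n = len(hours)
    if n != len(temps_c) or n < 2:
        raise ValueError("need two or more paired readings")
    mean_h = sum(hours) / n
    mean_t = sum(temps_c) / n
    num = sum((h - mean_h) * (t - mean_t) for h, t in zip(hours, temps_c))
    den = sum((h - mean_h) ** 2 for h in hours)
    if den == 0:
        raise ValueError("readings must span more than one point in time")
    return num / den


# Hypothetical readings of one component, taken an hour apart.
hours = [0, 1, 2, 3]
temps = [40.0, 40.5, 41.0, 41.5]
slope = temperature_trend(hours, temps)
print(f"trend: {slope:+.2f} °C per hour")
```

A steadily positive slope under constant operating conditions is the kind of pattern that would prompt a closer inspection.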

Ultimately, the choice between infrared and thermal imaging will depend on your specific needs and budget. Consider the application, level of precision required, and potential cost before making your decision.

Conclusion

After exploring the question, “Is infrared and thermal the same?” in depth, it is clear that the two terms are related but not interchangeable.

We have established that infrared technology is a broad term covering any use of infrared radiation, from temperature measurement to motion detection and communication, while thermal imaging specifically uses the infrared radiation emitted by objects to create an image of their temperature distribution.

Although they have different applications, it is important to note that both infrared and thermal technologies have their place in various industries.

Understanding the differences between infrared and thermal is crucial for individuals who are faced with the decision of choosing one or the other for their specific needs.

Overall, we hope that this article has helped shed light on the differences and similarities between infrared and thermal. By doing so, we aim to assist individuals in making informed decisions regarding their use.

Thank you for reading!

FAQ

Is infrared and thermal the same?

No, infrared and thermal are not the same. While both are related to heat, they refer to different aspects of heat measurement and imaging.

What is infrared technology?

Infrared technology refers to the use of infrared radiation for various purposes, such as temperature measurement, remote sensing, and communication. Some of these uses rely on the heat that objects emit, while others, such as remote controls, generate their own infrared signal.

What is thermal imaging?

Thermal imaging is a technique that uses the infrared radiation objects emit as heat to create images of objects or environments. It visualizes the temperature differences in a scene to identify patterns and anomalies.

What are the key differences between infrared and thermal?

The key differences between infrared and thermal lie in their focus and applications. Infrared technology encompasses a wider range of applications beyond thermal imaging, while thermal imaging specifically deals with capturing thermal energy and creating images based on temperature variations.

What are the similarities between infrared and thermal?

Both infrared and thermal involve the use of heat and can provide important insights into the thermal characteristics of objects or environments. They also play significant roles in various scientific and industrial applications.

What are some applications of infrared technology?

Infrared technology finds applications in fields like temperature measurement, night vision systems, security and surveillance, medical imaging, and even in consumer electronics like TVs and remote controls.

What are some applications of thermal imaging?

Thermal imaging is used in a wide range of fields including building inspections, electrical inspections, firefighting, search and rescue operations, industrial maintenance, and wildlife monitoring, among others.

How do I choose between infrared and thermal technology?

When deciding between infrared and thermal technology, it is important to consider the specific application or purpose. Assessing factors like required resolution, accuracy, cost, and environmental conditions can help determine the most suitable option.